Everyone’s Using AI. Almost Nobody’s Using It Institutionally.

A pattern worth naming, from the field.

This is not a research report. It is an observation, the kind that accumulates when you spend enough time working alongside organizations that are genuinely trying to figure out what to do with AI. What follows is a pattern we keep encountering across sectors and organization types, and it is worth naming clearly.

Most organizations have “deployed AI.” In practice, that usually means people are using chatbots: drafting emails, summarizing documents, answering questions. That is real value, but it is individual value. When the session closes, it is gone. The organization is no smarter than it was yesterday.

There Are Three Tiers. Most Organizations Are Parked at the First.

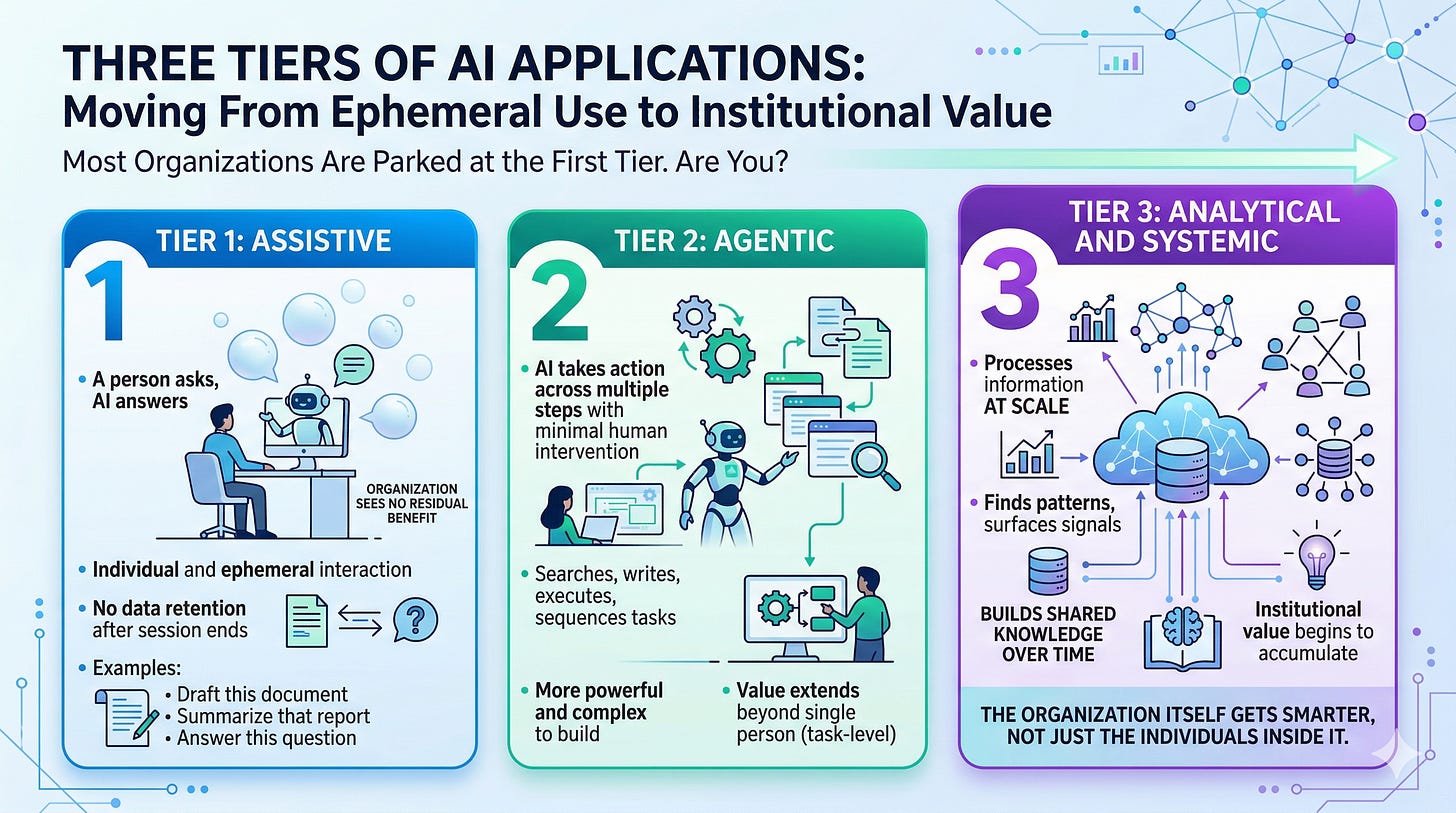

A useful way to cut through the noise around AI is to ask not whether your organization is “using AI,” but which tier of AI application is actually in use. There are three, and they are not equal.

Tier 1: Assistive. A person asks, AI answers. Draft this document. Summarize that report. Answer this question. The interaction is individual and ephemeral. When the session ends, nothing is retained. The organization sees no residual benefit from any of it.

Tier 2: Agentic. AI that takes action across multiple steps with minimal human intervention. It searches, writes, executes, and sequences tasks. More powerful, more complex to build responsibly, and increasingly accessible to technically capable individuals. Value can extend beyond a single person, but it is still largely task-level rather than organizational.

Tier 3: Analytical and Systemic. AI that processes information at scale to find patterns, surface signals, and build shared knowledge over time. This is where institutional value begins to accumulate. The organization itself gets smarter, not just the individuals inside it.

Consider what becomes possible when an organization stops treating after-action reports, project retrospectives, and decision logs as documents to be filed and starts treating them as signal to be processed. Over time, patterns emerge that no individual manager could see: which conditions consistently precede failure, which team configurations outperform, where decisions made at one level are quietly undermining outcomes at another. That is Tier 3 in practice.
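
As a rough illustration of what "signal to be processed" can mean, here is a minimal sketch in Python. It assumes retrospectives have already been captured as structured records. The `Retrospective` type, the condition labels, and the sample projects are all hypothetical, invented for this example. It simply counts how often each recorded condition coincides with a failed project. Real analysis would need far more data and care about confounding, but the shape of the idea is the same.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Retrospective:
    """Hypothetical structured form of one after-action report."""
    project: str
    conditions: frozenset[str]  # labels noted at project start (assumed taxonomy)
    failed: bool


def failure_rate_by_condition(records):
    """Return, for each condition, the share of projects with it that failed."""
    seen = Counter()
    failed = Counter()
    for record in records:
        for condition in record.conditions:
            seen[condition] += 1
            if record.failed:
                failed[condition] += 1
    return {condition: failed[condition] / seen[condition] for condition in seen}


# Illustrative data only; not drawn from any real organization.
records = [
    Retrospective("alpha", frozenset({"late_scope_change", "new_vendor"}), True),
    Retrospective("bravo", frozenset({"late_scope_change"}), True),
    Retrospective("charlie", frozenset({"new_vendor"}), False),
    Retrospective("delta", frozenset({"stable_team"}), False),
]

rates = failure_rate_by_condition(records)
for condition, rate in sorted(rates.items(), key=lambda item: -item[1]):
    print(f"{condition}: {rate:.0%} of projects failed")
```

The counting itself is trivial. The hard part is upstream: the sketch only works if every report records conditions with the same labels, which is a question of data discipline rather than AI.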

It is worth being direct about what that requires: Tier 3 is not primarily an AI problem. It is a data architecture problem. The AI is only as useful as the structure, consistency, and governance of what feeds it.

Most organizations are operating almost exclusively at Tier 1, and that is worth pausing on. The productivity gains are real, but they are local. They do not aggregate, and they do not feed institutional memory. A collection of individuals getting slightly faster at their work is not the same thing as an organization developing better judgment over time. There is a meaningful difference between those two outcomes, and most AI rollout plans do not acknowledge it.

Agentic AI Is a Different Animal

The jump from chatbot to agent is not incremental. It is a capability class change. Agents act; they do not just answer. That power is real, but so is the surface area for failure. And what we are seeing in practice is that the organizations most enthusiastic about AI have quietly developed a new problem: their technically capable people are each building their own agents, without coordination, without shared architecture, and without any real oversight.

The result is redundancy at best and compounding risk at worst. There is no shared architecture, no audit trail, and no clear ownership. When something goes wrong (and with agents operating across multiple systems and steps, something eventually will), there is often no map of what was built, by whom, or what it touches.
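
The missing "map" does not have to be elaborate. A minimal sketch in Python, assuming nothing more than a shared in-memory registry, is shown below. `AgentRecord`, `AgentRegistry`, and the sample agents and system names are hypothetical, invented for illustration. The point is only that recording an owner and the systems each agent touches makes the basic questions answerable.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    """Hypothetical inventory entry for one internally built agent."""
    name: str
    owner: str
    systems_touched: set[str] = field(default_factory=set)


class AgentRegistry:
    """Minimal shared inventory: what was built, by whom, and what it touches."""

    def __init__(self):
        self._agents = {}

    def register(self, record):
        # Refuse duplicate names so every agent has exactly one owner on record.
        if record.name in self._agents:
            raise ValueError(f"agent already registered: {record.name}")
        self._agents[record.name] = record

    def owner_of(self, name):
        return self._agents[name].owner

    def agents_touching(self, system):
        """Names of all registered agents that touch the given system."""
        return sorted(
            agent.name
            for agent in self._agents.values()
            if system in agent.systems_touched
        )


# Illustrative entries only.
registry = AgentRegistry()
registry.register(AgentRecord("invoice-triage", "j.rivera", {"erp", "email"}))
registry.register(AgentRecord("report-drafter", "m.chen", {"sharepoint", "email"}))

affected = registry.agents_touching("email")
print(affected)
```

In practice this would live in a shared system with change history rather than in memory, but even a spreadsheet with these three columns answers the question of what an outage or a policy change will touch.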

The Skill Gap Nobody Is Admitting

Effective agent development requires more than prompt writing. It requires coding intuition and an understanding of logic architecture: how systems sequence, how errors propagate, and where handoffs break down. That is not a broadly distributed skill set, even in organizations full of smart, capable people.

What we are watching is a growing frustration among people who are genuinely trying to engage with this technology and running into walls they were not told existed. That frustration is made considerably worse by a vocal minority who have succeeded and who are, perhaps unintentionally, making it sound trivial. The gap between “this changed my workflow” and “this is easy” is wider than it appears from the outside, and the people stuck in the middle of it know exactly what we are describing. This is a morale and credibility problem, not just a training gap.

The Governance Vacuum

Speed of adoption has outpaced any policy or oversight framework. That is not unique to any particular sector; it is a systemic feature of how AI is rolling out everywhere. The technology moves faster than the institutions responsible for governing it, and most organizations have defaulted to something close to permissive inaction while waiting for someone else to define the rules.

For organizations operating in high-stakes environments, that posture carries real risk. The “move fast and find out” approach to AI governance has a different cost profile when the systems in question affect critical decisions, sensitive information, or operational outcomes that cannot easily be walked back. The absence of a framework is itself a choice, and it is one with consequences.

The Pattern Is Familiar

None of this is an argument against AI adoption. It is an argument that deploying tools without a framework for what those tools are supposed to build toward is a pattern organizations keep repeating. The dashboard problem, reaching for the visible surface without investing in the underlying system, is one version of it. The ungoverned agent proliferation problem is another.

The question worth asking is not whether your organization is using AI. It almost certainly is. The more useful question is which tier, toward what end, governed by what framework, and building toward what kind of institutional capacity. Those are harder questions than “has AI been deployed,” but they are the ones that actually matter.

The organizations that will come out ahead are not necessarily the ones moving fastest right now. They are the ones growing into this deliberately, building the judgment, structure, and governance capacity to use these tools in ways that actually make the institution smarter over time. AI layered on top of broken systems does not transform them. It accelerates them.

There are organizations thinking seriously about this. Jack Dorsey and Roelof Botha recently published a piece laying out how Block is attempting to replace what hierarchy does with an AI-driven intelligence layer rather than simply augmenting individuals. It is worth reading carefully. It is also worth noting what made that ambition possible: a remote-first culture where work is already machine-readable, and a proprietary transaction data signal that compounds daily. The vision is instructive, but the conditions enabling it are not universally replicable. Most organizations will need to find their own path to Tier 3 from wherever they actually are.

For most organizations, the honest starting point is not a Tier 3 strategy. It is an audit of whether the conditions for one exist. Is work being captured in ways that are consistent and machine-readable? Is there a shared data model, or a fragmented one? Is anyone responsible for institutional memory, or does it walk out the door when people leave? Those questions come before the technology.

We are curious what others are seeing. Are your organizations handling this differently? Is anyone operating with a coherent plan across all three tiers? We would genuinely like to know.

If these questions are live in your organization, Storm King Analytics would be glad to continue the conversation.